SEO is something that most companies prefer to acknowledge in a roundabout fashion. They take it into account when creating content or designs, but otherwise, consider it a second-string priority. To some extent, this is because so many things factor into SEO that making it a focus without a careful plan is a recipe for scope creep and wasted effort.

But it’s important to run a comprehensive SEO audit from time to time (no matter the size of your business overall) if you rely significantly on organic traffic. Otherwise, you may well end up spending time and money on new campaigns that ultimately prove ineffective because your website has some basic underlying technical issues.

Let’s take a look at what a comprehensive SEO audit has to include so you can simply check things off when yours gets underway.

Confirming indexed pages

The very first thing you need to check in an SEO audit is how many pages of the site are currently indexed. You can achieve this in various ways, but the simplest is to carry out a Google search using the site: operator with your basic domain name (for example, site:samplesite.co.uk). Otherwise, venture into Google Search Console to check your Index Coverage Status report.

If you want a page to rank but it isn’t even being indexed, that’s a problem to address immediately. You might need more internal links, a sitemap, or a revised robots.txt file. There may also be an issue with duplication, but we’ll get to that later. Form a list of all indexed pages and move ahead.
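As a rough illustration of the robots.txt check, here is a minimal Python sketch that tests whether pages you want to rank are blocked from crawling. The robots.txt content, the page paths, and the `blocked_paths` helper are all hypothetical; in a real audit you would load your own file and your own list of pages.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, used here instead of a live fetch.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Disallow: /products/
"""


def blocked_paths(robots_txt, paths, user_agent="Googlebot"):
    """Return the paths that the given crawler is not allowed to fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [path for path in paths if not parser.can_fetch(user_agent, path)]


# Hypothetical pages that the site owner wants to rank.
pages_to_rank = ["/", "/products/toasters/", "/blog/seo-audit"]
print(blocked_paths(SAMPLE_ROBOTS_TXT, pages_to_rank))
```

Any path this returns is one that crawlers are being told to skip, which would explain why it is missing from the index.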

Reviewing analytics

Before you continue with the audit, it will be useful to get an idea of how the site has been performing by heading into Google Analytics (or whichever analytics suite you have configured). Generally browse the information, looking for notable spikes or dips in performance as well as any metrics that stand out. Which pages have been receiving the most views and retaining attention most effectively? Does anything massively surprise you?

Knowing which pages are proving most significant to the site’s overall performance, you can direct your attention accordingly throughout the remaining steps. This is particularly important if you’re dealing with a massive site with hundreds or even thousands of pages, because you’re very unlikely to have the time and resources needed to take an in-depth look at each one.

Checking canonicals

Each important page needs to have a distinct identity to avoid dividing and weakening search traffic, and this is accomplished in cases of similar content through canonical tags. The canonical tag points to the primary (canonical) version of a page and tells search crawlers that any other page sharing its content should defer to it in rankings.

Canonicals are also important for handling URL variations for homepages. A website could be www.samplesite.co.uk, or samplesite.co.uk, or https://www.samplesite.co.uk, or one of various other configurations. Each page should default to one version, the canonical URL, with all the others sent to it through 301 redirects. Fail to set that up and you could have your www and non-www URLs competing for the same rankings.
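To make the idea concrete, the sketch below maps each variation of the sample domain to a single canonical URL, which is the target a 301 redirect would point to. It assumes the https and www version is the one you have chosen as canonical; the constants and the `canonical_url` helper are illustrative names rather than part of any particular CMS or server.

```python
from urllib.parse import urlsplit, urlunsplit

# Assumption: the https + www version is the chosen canonical form.
CANONICAL_SCHEME = "https"
CANONICAL_HOST = "www.samplesite.co.uk"
KNOWN_HOSTS = {"samplesite.co.uk", "www.samplesite.co.uk"}


def canonical_url(url):
    """Map any known variation of the site's URL to its canonical form."""
    # A bare hostname has no scheme, so add one to let urlsplit find the host.
    if "://" not in url:
        url = "http://" + url
    parts = urlsplit(url)
    if parts.netloc.lower() not in KNOWN_HOSTS:
        raise ValueError(f"not a URL for this site: {url}")
    path = parts.path or "/"
    return urlunsplit((CANONICAL_SCHEME, CANONICAL_HOST, path, parts.query, ""))


for variant in ["www.samplesite.co.uk", "samplesite.co.uk",
                "https://www.samplesite.co.uk"]:
    print(variant, "->", canonical_url(variant))
```

During an audit, any variation that does not already redirect to the value this returns is a candidate for a new 301 rule.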

Checking URL structure

URL structure is significant for people and crawlers alike, because it provides essential context about the internal framework of a website. A solid URL structure will be readable and logical, making it clear where the current page stands in relation to all the others. The typical website will use whichever URL structure is default to the CMS. Just about any modern CMS will use a structure suitable for SEO, but a site that has been around for a while might have something more convoluted.

If your URLs aren’t including relevant keywords and clearly nesting categories (an individual toaster on an e-commerce site should be at something like /products/toasters/toaster1.html, and not simply a seemingly-random string), then look for a way to update the URL presets, and make manual changes if needed.
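If you end up generating URLs yourself, a sketch along these lines shows one way to build a readable, nested path from a category trail and a product name. The `slugify` and `product_url` helpers and the /products prefix are assumptions for illustration; your CMS may well have its own equivalents.

```python
import re


def slugify(text):
    """Lowercase the text and replace runs of other characters with hyphens."""
    slug = re.sub(r"[^a-z0-9]+", "-", text.lower())
    return slug.strip("-")


def product_url(category_path, product_name):
    """Build a nested, keyword-bearing path such as /products/toasters/..."""
    segments = ["products"]
    segments += [slugify(category) for category in category_path]
    segments.append(slugify(product_name))
    return "/" + "/".join(segments)


print(product_url(["Toasters"], "4-Slice Toaster (Red)"))
# prints /products/toasters/4-slice-toaster-red
```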

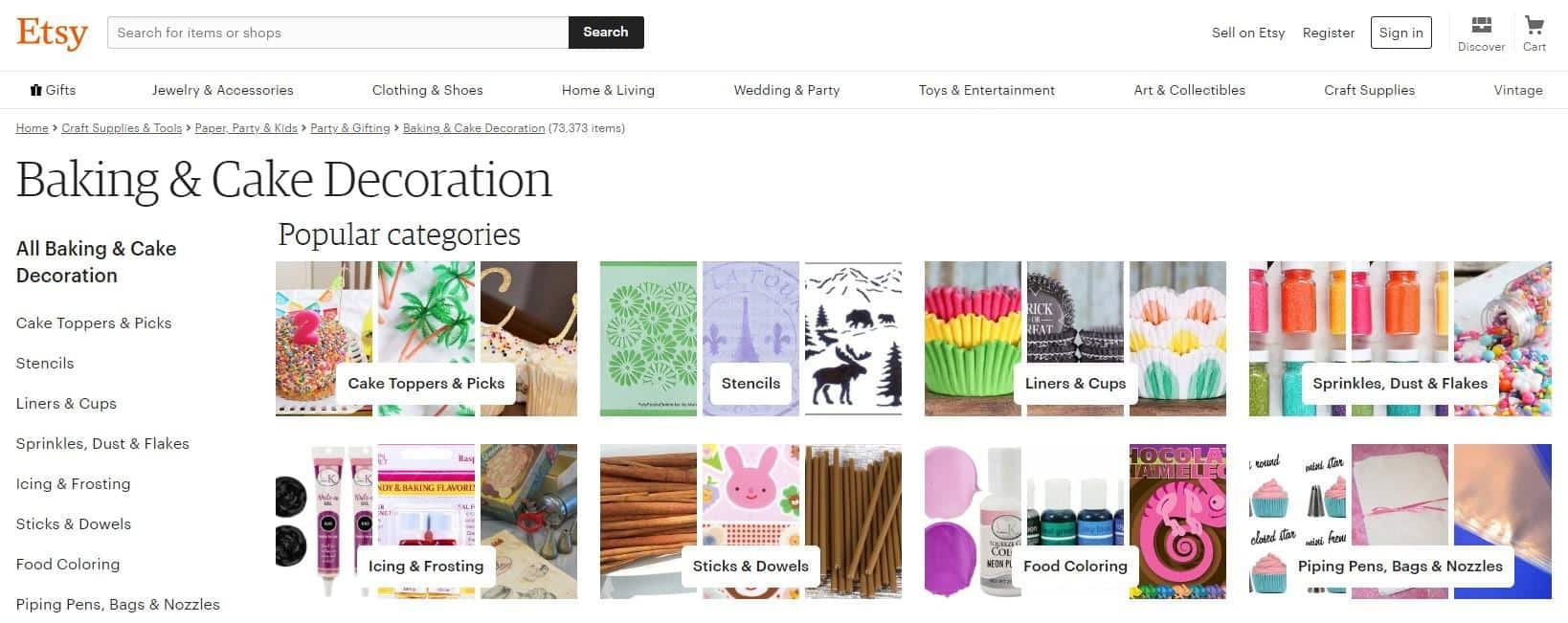

For a good example of how to implement a URL structure for numerous listings, take a look at the Exchange marketplace: each store category has a neat and easy-to-parse URL that also works well for SEO (the internal links help, too). Another good example is Etsy, the marketplace for creative crafting work: the URL for the page in the screenshot below is as follows:

https://www.etsy.com/c/craft-supplies-and-tools/paper-party-and-kids/party-and-gifting/baking-and-cake-decoration?ref=catnav-10983

This URL isn’t 100% readable, of course, but that’s not surprising from a site with complex product filtering. But take a look at how the URL mirrors the breadcrumb trail provided on the page. The “c” stands for categories, then everything branches in a way that makes total sense.

Testing page speed

Page speed is extremely important, not just because it’s annoying to wait for sites to load (and we’ll often leave sites that are taking too long) but also because Google is moving towards mobile-first indexing. When that happens, page speed will be a ranking factor (and something mentioned in listings) for search across all devices.

And when I talk of testing page speed here, I don’t just mean running your site through Google PageSpeed Insights and implementing whatever suggestions are provided. I also mean carrying out real-world testing using mediocre smartphones on 2G networks. How slow does your site feel, regardless of what the stats say? Find that out, and only then can you decide if your site requires further optimisation work.

Checking image optimisation

There are various viable file types for online images, and it’s worth spending the time checking how optimised your images are — not only for speed but also for quality. It isn’t unheard of for a site owner to mistakenly store images with transparent backgrounds as JPEGs (which don’t support transparency), use massive uncompressed BMP files for no good reason, or save complex photos as PNGs (leaving them needlessly large).

In general, anything with distinct colours and/or transparency should be stored as a PNG, while anything more complex (such as a photo) should be stored as a JPEG. You should also use SVGs (or other vector formats) for logos and basic graphic elements to trim the bloat and allow them to scale sharply to any size. Every image on a site should be as detailed or as minimal as the context demands.
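The rule of thumb above can be written down as a small decision helper, which is handy if you want to flag mismatched files while working through a long image list. This is only a sketch of the guidance in this section: the `suggest_format` function and its three yes/no inputs are hypothetical, and real images will sometimes need a judgment call.

```python
def suggest_format(is_vector_artwork, has_transparency, is_photo):
    """Suggest a file format using the rule of thumb from this section."""
    if is_vector_artwork:
        # Logos and basic graphic elements scale sharply as vectors.
        return "SVG"
    if has_transparency:
        # JPEG does not support transparency, so PNG is the safer choice.
        return "PNG"
    if is_photo:
        # Complex images are usually needlessly large as PNGs.
        return "JPEG"
    # Simple images with distinct colours.
    return "PNG"


def is_mismatch(filename, **traits):
    """Report whether a file's extension differs from the suggestion."""
    extension = filename.rsplit(".", 1)[-1].upper().replace("JPG", "JPEG")
    return extension != suggest_format(**traits)


print(is_mismatch("hero-photo.png", is_vector_artwork=False,
                  has_transparency=False, is_photo=True))
# prints True
```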

Reviewing content

Even the most highly-polished service website is unlikely to rank well without expansive content, because that’s the fuel that Google’s crawler needs to draw conclusions. Accordingly, the bulk of the effort for an SEO audit should go to reviewing the site’s content and coming away with ideas for doing more with what’s already there and subsequently expanding upon it.

When reviewing content, think about the target audience. How do they speak? What might they search for? What content do they expect? And beyond that, think about the formatting. To be parsed and interpreted by crawlers and mobile users alike, your content should be sliced into digestible chunks using informative subheadings.

If you have two pages that say essentially the same thing, consider merging them to create a superior page more likely to rank well. If you have a page of extremely thin content, either add to it or remove it entirely so it doesn’t make the rest of your site look bad. You want to build a reputation for consistent quality and become an authority in Google’s estimation.

Checking metadata

Every page that you want to rank needs a clear and informative meta title. The meta title will determine the title of the results listing, provide context for readers, and help the page to rank for the keywords it mentions. And while meta descriptions don’t have such a reliable effect on results listings (Google can use them for the descriptions, but it often chooses not to), they’re worth getting right to make Google more likely to leave them as they are.

Returning briefly to images, you also need to check your alt text. Because search crawlers can’t easily interpret images (yet) and accessibility is a factor, each image should have an alt text description explaining what the image shows. You don’t need to get too complex — just say what’s pictured in very plain and simple terms.
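Checking alt text by hand gets tedious on larger pages, so a short script can do the first pass. The sketch below uses Python’s built-in HTML parser to list images with missing or empty alt text; the `MissingAltFinder` class, the `images_missing_alt` helper, and the sample markup are made up for illustration.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> whose alt text is missing or blank."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = attributes.get("alt")
        # An empty alt can be valid for purely decorative images, so treat
        # these results as prompts for review rather than definite errors.
        if alt is None or not alt.strip():
            self.missing.append(attributes.get("src", "(no src)"))


def images_missing_alt(html):
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing


# Hypothetical page fragment.
SAMPLE_HTML = """
<img src="toaster-red.jpg" alt="Red four-slice toaster">
<img src="banner.png">
<img src="logo.svg" alt="">
"""
print(images_missing_alt(SAMPLE_HTML))
# prints ['banner.png', 'logo.svg']
```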

Checking structured data

Structured data allows you to provide a contextual framework for a page of content, isolating certain elements and defining what they mean so they can more easily be interpreted and appropriated by search engines. If you search for a query in Google and see a listing enriched with extra details (star ratings, prices, event dates and so on), those details have likely been drawn from the source website through structured data.

Not every website uses structured data, of course, and not every website needs to (it isn’t currently a ranking factor, and it can be complex to implement), but since it might become a ranking factor in the future and it’s already being used by Google to draw out results, it’s certainly worth including (and getting right) if you have the opportunity.
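For a sense of what structured data looks like in practice, here is a small sketch that builds a JSON-LD snippet for a product page using the schema.org Product and Offer types. The `product_json_ld` helper and the product details are invented for the example, and a real page would likely need more properties than the handful shown here.

```python
import json


def product_json_ld(name, description, price, currency):
    """Return a JSON-LD <script> block describing a product."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical product details.
print(product_json_ld("Red Four-Slice Toaster",
                      "A four-slice toaster in red.", 34.5, "GBP"))
```

The resulting block goes in the page’s HTML, and it is worth running the output through a structured data testing tool before relying on it.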

Checking social connectivity

Among many other things, Google will look to social media activity when gauging how to rank a website, so it isn’t something to be overlooked (even if your traffic is 100% organic and you have little interest in attracting social media followers). To provide a better experience at barely any cost, every site should have social sharing links, allowing a reader to share a page through Twitter, Facebook, Snapchat, etc.

Your audit should manually review the social links (are they adequately prominent?) as well as the analytics data behind them (are they being used?) to determine their current value. If the links aren’t working, or are all but impossible to spot if you don’t already know where they are, then there are some changes to be made.

Analysing backlinks

Link building remains a core part of an SEO strategy, and isn’t going anywhere. Google relies on links from experts and authorities to gauge the relative significance and credibility of unfamiliar sites, after all, so your site’s array of backlinks is likely having a major effect on its rankings.

Not all backlinks are worth having, though. A backlink from a sketchy site with no ranking potential, weak content and (potentially) scammy intent is more likely to sap your rankings than to lift them, and it may even earn you a penalty by association. Analysing your backlink profile will allow you to find any threatening backlinks and formally disavow them so they can’t damage your rankings. It will also give you an overall idea of which sites are your most important backers.
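Google’s disavow tool accepts a plain text file with one entry per line: either a full URL or a whole domain prefixed with domain:, with lines starting with # treated as comments. The sketch below assembles such a file from the results of a backlink review; the `build_disavow_file` helper and the example domains are hypothetical.

```python
def build_disavow_file(domains, urls):
    """Build the text of a disavow file from flagged domains and URLs."""
    lines = ["# Disavow list compiled during SEO audit"]
    # Disavow every link from these domains.
    lines += [f"domain:{domain}" for domain in sorted(set(domains))]
    # Disavow only these specific linking pages.
    lines += sorted(set(urls))
    return "\n".join(lines) + "\n"


# Hypothetical results of a backlink review.
flagged_domains = ["spammy-links.example", "link-farm.example"]
flagged_urls = ["http://forum.example/thread?id=123"]
print(build_disavow_file(flagged_domains, flagged_urls), end="")
```

Disavowing is hard to undo quickly, so it is safer to list only the links you have actually reviewed and judged harmful.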

Gathering feedback

Beyond all the technical factors that you can assess and the metrics you can review, there are the countless intangibles relating to your UX that affect how long people spend on your site, how they view it, and how likely they are to return. You might think that there’s little wrong with your site, but if you’re not impressing your visitors, you won’t get very far.

A comprehensive SEO audit should gather some in-depth feedback from previous visitors and fresh visitors alike. Ideally, you’ll get some positive and some negative comments from both groups, giving you a better notion of how you can improve upon the weaknesses and further exploit the strengths. You might find that there’s a feature you could easily include that would make a huge difference to your visitors (a live chat function, for instance).

Assessing competitors

A website doesn’t perform in a vacuum. It ranks relative to other sites, and a very decent site can drop from the top spot to the third page for a particular keyword purely as a result of numerous other sites making improvements. Because of this, no SEO audit is complete without taking a close look at relevant competitors.

What does this achieve? Well, it gives you an idea of how they operate: how they write their content, how they design their menus, how they pursue their backlinks, and how they interact with their followers. If they’re doing something extremely effective, you can learn from it and do something similar, or even try to one-up them. If they’re making some mistakes, you can further outperform them in those areas to shore up those advantages.

Does this list include everything you can include in an SEO audit? No, because you can always find something else to investigate. But it does include everything you can’t afford to leave out if you want to make an SEO audit worthy of the time investment.

Patrick Foster is a writer and e-commerce expert for Ecommerce Tips. From time to time, he dreams about keywords. Visit the blog, and check out the latest news on Twitter @myecommercetips.